Many pharma teams are struggling with AI, but not because the tools are poor.

They are struggling because AI is being used in the wrong place, in the wrong order, and with the wrong expectations.

That matters in pharma because content is rarely just a writing task. It sits inside a wider system of approved source material, promotional and non-promotional boundaries, HCP and patient audience separation, prescribing information, adverse event reporting, claims substantiation, medical review, regulatory review, signatory approval and commercial intent.

A prompt can produce a draft in seconds. That part is easy.

The harder part is knowing what the content is allowed to say, who it is for, what approved source it is based on, how claims will be substantiated, how it will move through review, and what should happen next if the content works.

When that thinking is weak, AI does not solve the problem. It simply makes the weakness faster.

The real problem is not the model

A lot of pharma teams are now using ChatGPT, Claude or other AI tools to speed up content production. They are refining prompts, creating internal templates and experimenting with brand tone, modular content and faster first drafts.

That can help.

But better prompts do not fix a weak content workflow.

If the process starts with “write me something about this therapy area” or “create an HCP campaign page from these notes”, the result may be fluent, polished and superficially usable.

But in pharma, polished is not enough.

The content still needs:

- a clearly defined audience

- a promotional or non-promotional classification

- approved source material

- claims that are accurate, balanced and capable of substantiation

- the right prescribing information and adverse event reporting requirements, where relevant

- clear separation between HCP, patient and public-facing content

- an agreed medical, regulatory and compliance review route

- a useful next step for the reader

When those things are missing, AI fills the gap with plausible language.

That is why so much AI-assisted pharma content sounds tidy but generic. It may read well, but still be commercially weak, hard to approve, difficult to repurpose and risky to rely on.

Why this happens

Most pharma teams that use AI badly are not doing so because they are careless.

They are using it badly because they are under pressure.

They need to produce more content, support more channels, localise assets, refresh websites, feed social channels, respond to internal requests and reduce long drafting cycles. AI looks like an obvious solution because it reduces the effort of writing.

But writing is usually not the main bottleneck.

In pharma, the real bottlenecks are more often:

- weak briefs

- unclear ownership

- poor source inputs

- mixed audiences

- uncertainty over promotional status

- medical and regulatory review friction

- late signatory concerns

- poor version control

- uncertainty about what the content is meant to achieve

If those issues are not resolved first, AI does not remove them. It just moves them further downstream, where they show up as rework, delay, inconsistency or bland output.

What weak AI use looks like in practice

A brand, medical or digital team wants an article, campaign page, email sequence, HCP resource or set of LinkedIn posts.

They start with a prompt before they have agreed:

- whether the content is promotional or non-promotional

- whether it is for HCPs, patients, carers, payers, the public or mixed audiences

- which approved references, SmPCs, clinical papers or internal materials can be used

- what claims are supportable

- whether prescribing information or adverse event reporting statements are required

- how the content fits with the wider brand, disease awareness or therapy-area strategy

- who needs to review it and at what stage

- where the reader should go next

The AI produces something clean and usable on the surface.

Then the problems begin.

The claims are too broad. The balance feels off. The audience is unclear. The content drifts towards promotion when it was meant to be educational. Reviewers are uneasy. The references do not support the wording closely enough. The content needs heavy editing. Medical, regulatory, brand and digital stakeholders pull it in different directions.

Eventually the conclusion becomes:

“AI content does not really work for us.”

That is usually the wrong conclusion.

A more honest conclusion is:

“We used AI to draft before we had defined the content workflow properly.”

The most common mistakes

1. Starting with the tool instead of the source

Strong pharma content usually starts with something real:

- an approved claim set

- a therapy-area insight

- an HCP question

- a patient-support need

- a medical education gap

- an objection from the field

- a review finding

- a product or pathway challenge

- a regulatory or access-related tension

- an approved core deck, SmPC, study or guidance document

When teams skip that and go straight to prompting, the output becomes generic very quickly.

AI is far better at shaping, structuring and adapting approved or expert-led material than inventing strong pharma content from nothing.

2. Treating output as strategy

Many teams use AI to generate isolated assets: a post, an email, a page section, a summary, a congress follow-up, a disease awareness article.

That can create activity, but not necessarily clarity.

A better approach is to start with one strong anchor asset built around a real audience need or commercial problem, then adapt it into other formats. For example:

- an HCP campaign page becomes email copy, rep follow-up copy and congress support content

- a disease awareness article becomes patient-facing web copy, social snippets and internal briefing material

- a medical education theme becomes a webinar outline, landing page and nurture sequence

- a product access message becomes a structured HCP resource hub

That creates more consistency, makes review easier and gives the content a clearer role.

3. Confusing faster drafting with lower-risk delivery

Speed is useful.

But faster drafting is not the same as lower-risk delivery.

In pharma, a fast first draft can still slow everything down if it creates more review burden, more uncertainty or more rewriting.

The real question is not:

“How quickly can we generate content?”

It is:

“Does this workflow help us create content that is accurate, balanced, useful, easier to review and more likely to move the right audience forward?”

4. Over-focusing on prompts and under-thinking governance

Prompting matters. Templates matter. Reusable instructions matter.

But they sit downstream of more important decisions.

Before the prompt, teams should already know:

- the audience

- the purpose

- the channel

- the source base

- the claim boundaries

- the review route

- the approval requirements

- the intended next step

Without that, prompt refinement becomes a way of polishing uncertainty.
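
One way to make that checklist hard to skip is to treat it as a gate that runs before any prompt is written. The sketch below is illustrative only: `ContentBrief`, `missing_decisions` and `ready_to_prompt` are hypothetical names, and the fields simply mirror the eight decisions listed above. It checks that each decision has been written down, not that it is correct.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class ContentBrief:
    """Hypothetical brief record; one field per decision in the checklist."""
    audience: Optional[str] = None
    purpose: Optional[str] = None
    channel: Optional[str] = None
    source_base: Optional[str] = None
    claim_boundaries: Optional[str] = None
    review_route: Optional[str] = None
    approval_requirements: Optional[str] = None
    next_step: Optional[str] = None


def missing_decisions(brief: ContentBrief) -> List[str]:
    """Return the names of checklist items that are empty or blank."""
    return [
        f.name
        for f in fields(brief)
        if not (getattr(brief, f.name) or "").strip()
    ]


def ready_to_prompt(brief: ContentBrief) -> bool:
    """A brief is ready only when every decision has been recorded."""
    return not missing_decisions(brief)


# Example values are placeholders, not a recommended brief.
brief = ContentBrief(
    audience="HCPs",
    purpose="Disease awareness education",
    channel="Gated HCP portal",
)
print(missing_decisions(brief))
# ['source_base', 'claim_boundaries', 'review_route',
#  'approval_requirements', 'next_step']
print(ready_to_prompt(brief))  # False
```

A check like this does not make the decisions for the team. It only makes it visible when drafting has started before they were made.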

5. Ignoring audience boundaries

This is one of the biggest pharma-specific risks.

A piece of content might be suitable for HCPs but not patients. It might work as non-promotional disease awareness but become problematic if the product connection is too strong. It might be acceptable in a gated HCP environment but not on an open public page.

AI will not reliably understand those boundaries unless the workflow makes them explicit.

That means defining upfront:

- who the content is for

- who must not be targeted

- where the content will appear

- whether the environment is gated or public

- what terminology is appropriate for the audience

- whether product references are permitted

- what review route applies

Without that clarity, content can become hard to approve and harder to govern.
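
Making those boundaries explicit can be as simple as recording them next to the content and checking each proposed placement against them. The following is a minimal sketch under stated assumptions: `AudienceBoundary`, `Placement` and `boundary_issues` are hypothetical names, the audience labels are placeholders, and the three rules are illustrative rather than a statement of any code of practice. It flags questions for reviewers; it does not replace them.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class AudienceBoundary:
    """Hypothetical record of the boundaries agreed upfront for one asset."""
    intended: FrozenSet[str]            # who the content is for
    excluded: FrozenSet[str]            # who must not be targeted
    gated_only: bool                    # must sit behind an access gate
    product_references_permitted: bool  # whether product mentions are allowed


@dataclass(frozen=True)
class Placement:
    """Hypothetical description of where a version of the asset will appear."""
    audience: str
    gated: bool
    mentions_product: bool


def boundary_issues(boundary: AudienceBoundary, placement: Placement) -> List[str]:
    """List every way a placement conflicts with the agreed boundaries."""
    issues: List[str] = []
    if placement.audience in boundary.excluded:
        issues.append(f"audience '{placement.audience}' is explicitly excluded")
    elif placement.audience not in boundary.intended:
        issues.append(f"audience '{placement.audience}' was never defined as intended")
    if boundary.gated_only and not placement.gated:
        issues.append("content requires a gated environment")
    if placement.mentions_product and not boundary.product_references_permitted:
        issues.append("product references are not permitted for this asset")
    return issues


boundary = AudienceBoundary(
    intended=frozenset({"hcp"}),
    excluded=frozenset({"patient", "public"}),
    gated_only=True,
    product_references_permitted=True,
)

# Reusing HCP content on an open public page raises two issues.
for issue in boundary_issues(boundary, Placement("public", gated=False, mentions_product=True)):
    print(issue)
```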

6. Leaving content disconnected from a useful next step

A lot of AI-assisted content still ends with vague direction:

- learn more

- get in touch

- contact us

- visit our website

- read more

That is not enough.

Good pharma content should connect naturally to something useful and appropriate, such as:

- a relevant HCP resource

- a disease awareness page

- a product information page, where appropriate

- a prescribing information route

- a patient support resource

- a medical information contact route

- a webinar registration page

- a related article or educational asset

Content does not need to be aggressively sales-led. In pharma, it often should not be.

But it does need a reason to exist.

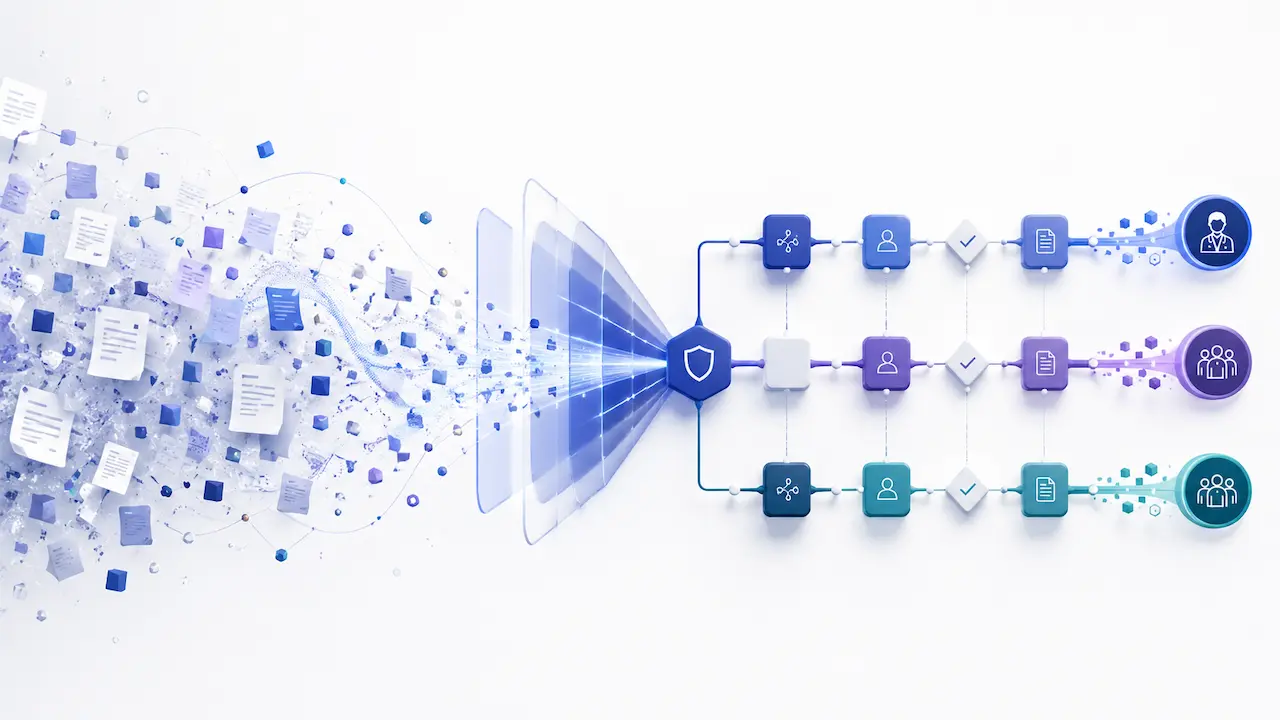

What better looks like

A better AI-assisted pharma content workflow is less glamorous and much more effective.

It usually looks something like this.

First, capture real inputs. That might include approved claims, SmPCs, clinical papers, field insights, medical review feedback, stakeholder questions, brand plans, patient-support needs, market access challenges or existing approved materials.

Second, define the job the content needs to do. Clarify the audience, channel, promotional status, scrutiny level, key message and commercial or educational purpose.

Third, identify the review route before drafting. Decide who needs to review the content, what they are reviewing for, and where AI can safely support the process.

Fourth, create one strong anchor asset grounded in approved or expert-led material. That might be a website article, HCP campaign page, disease awareness resource, webinar page, patient-support page or thought-leadership piece.

Fifth, use AI to help structure, adapt, summarise and repurpose that asset into other formats, rather than generating disconnected pieces from scratch each time.

Sixth, build governance into the workflow from the start. That includes source traceability, version control, approval status, claims support, audience separation and clear ownership.

Seventh, connect the content to a clear destination, so that a reader who finds it has a meaningful next step to take.

That is where AI becomes genuinely useful.

Not as a replacement for medical, regulatory, commercial or strategic judgement, but as support inside a better-defined system.
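
The anchor-asset and governance steps above can be sketched as a small data model. This is an assumption-laden illustration, not a description of any particular content system: `Asset`, `derive` and `governance_gaps` are hypothetical names, the status values and reference IDs are placeholders, and the rule that an adapted asset restarts as a draft is an assumption made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Asset:
    """Hypothetical governance record for one piece of content."""
    asset_id: str
    version: int
    owner: str
    approval_status: str  # placeholder values: "draft", "in_review", "approved"
    audience: str
    # Maps each claim's wording to the ID of the source that supports it.
    claims: Dict[str, str] = field(default_factory=dict)


def governance_gaps(asset: Asset) -> List[str]:
    """Report missing ownership and claims with no source reference."""
    gaps: List[str] = []
    if not asset.owner.strip():
        gaps.append("no owner assigned")
    for claim, source in asset.claims.items():
        if not source.strip():
            gaps.append(f"claim has no source: {claim}")
    return gaps


def derive(anchor: Asset, asset_id: str, audience: str, claims_used: List[str]) -> Asset:
    """Create an adapted asset that may only reuse claims held by the anchor."""
    unknown = [c for c in claims_used if c not in anchor.claims]
    if unknown:
        raise ValueError(f"claims not present in anchor asset: {unknown}")
    # Assumption: adapted content gets its own review, so it starts as a draft.
    return Asset(
        asset_id=asset_id,
        version=1,
        owner=anchor.owner,
        approval_status="draft",
        audience=audience,
        claims={c: anchor.claims[c] for c in claims_used},
    )


anchor = Asset(
    asset_id="hcp-campaign-page",
    version=3,
    owner="brand-team",
    approval_status="approved",
    audience="hcp",
    claims={
        "Claim A (placeholder wording)": "REF-001",
        "Claim B (placeholder wording)": "REF-002",
    },
)

email = derive(anchor, "hcp-follow-up-email", "hcp", ["Claim A (placeholder wording)"])
print(email.approval_status)   # draft
print(email.claims)            # {'Claim A (placeholder wording)': 'REF-001'}
print(governance_gaps(email))  # []
```

The design choice worth noting is that `derive` refuses any claim the anchor does not already carry. New wording has to be added to the anchor, with a source, before it can appear in an adapted format.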

Why this matters commercially

For pharma teams, the cost of weak AI use is not just mediocre copy.

It is:

- wasted medical and regulatory review time

- slower approval cycles

- inconsistent claims across channels

- poor reuse of approved content

- weaker HCP engagement

- poor patient or public-facing clarity

- lower confidence in the content system

- increased risk of content being used in the wrong context

- digital activity that looks busy but does little to build trust or support action

A better workflow can improve all of that.

It can help teams:

- turn approved source material into clearer digital assets

- make better use of internal medical and commercial expertise

- create more consistent content across web, email, social, congress and field channels

- reduce unnecessary redrafting

- support better review and approval discipline

- improve discoverability without weakening governance

- connect content to appropriate next-step action

That is a better commercial standard than simply producing more content, faster.

Final thought

If your AI-assisted pharma content feels generic, slow to approve or commercially weak, that does not necessarily mean the tool is the problem.

It may mean the workflow is.

AI is good at helping teams shape, structure, adapt and draft.

It is not a substitute for source material, audience clarity, claims discipline, medical judgement, regulatory review, approval governance or a clear digital journey.

Free Prompt Library

At Genetic Digital, this is how we approach AI-supported content work for pharma and regulated healthcare teams.

We do not see AI as a shortcut around strategy, source material, review or governance. We see it as a useful support tool when the workflow is properly defined.

That is why clients we work with also get free access to our practical AI prompt library, designed to help regulated teams brief, structure, review and repurpose content more effectively.

The prompts are not intended to replace medical, regulatory or commercial judgement. They are there to help teams use AI in a more controlled, consistent and useful way, with clearer inputs, better structure and less wasted effort.

Because in pharma, the real advantage is not just having access to AI.

It is knowing how to use it without weakening clarity, governance or trust.